The Digital Puzzle That Captivated Cybersecurity

Back in late 2025, something fascinating happened in the cybersecurity community. Raw data from the Jeffrey Epstein case—specifically, base64-encoded email attachments—started circulating. These weren't your typical leaked documents. They were the raw building blocks of documents, encoded in a format most people only encounter in passing. The challenge? Reconstructing the original, uncensored PDFs from what looked like gibberish to the untrained eye.

I remember first seeing the discussion on r/cybersecurity. The post had over 8,000 upvotes and hundreds of comments from professionals, hobbyists, and curious onlookers. Everyone wanted to know: How do you actually turn this encoded data back into readable documents? And more importantly, what does this process teach us about data persistence, forensic analysis, and the nature of "deleted" information in our digital age?

This isn't just about one high-profile case. The techniques discussed here apply to countless forensic investigations, data recovery scenarios, and security audits. Whether you're a professional investigator or just someone curious about how data works beneath the surface, understanding this process reveals fundamental truths about our digital world.

Understanding Base64: More Than Just Random Characters

Let's start with the basics, because I've found even experienced tech folks sometimes misunderstand what base64 actually is. It's not encryption—that's the first and most important distinction. Base64 is an encoding scheme, a way to represent binary data using only ASCII characters. Think of it like translating a book into Morse code. The information is all there, just in a different format that's safer to transmit through systems that might choke on raw binary.
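You can check that base64 is an encoding and not encryption by round-tripping some bytes with Python's standard base64 module. This is a minimal sketch. The sample bytes are arbitrary and only for illustration, and no key appears anywhere.

```python
import base64

# Arbitrary sample bytes (illustrative only): "%PDF" followed by non-printable bytes.
original = bytes([0x25, 0x50, 0x44, 0x46, 0x00, 0xFF, 0x10, 0x80])

# Encoding maps each 3-byte group to 4 characters from A-Z, a-z, 0-9, '+', '/'.
encoded = base64.b64encode(original)
print(encoded.decode("ascii"))  # JVBERgD/EIA=

# Decoding uses the same fixed, public alphabet; there is no key or password.
decoded = base64.b64decode(encoded)
assert decoded == original
```

The leading JVBER is what "%PDF" always encodes to. That is one reason base64-encoded PDFs are easy to spot in a raw dump.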

Email systems have used base64 for attachments for decades. When you send a PDF via email, your email client converts that binary file into a long string of letters, numbers, plus signs, and slashes, sometimes ending in one or two equals signs as padding. That's what gets transmitted. The recipient's email client then decodes it back into the original binary file. The Epstein documents that leaked weren't the PDFs themselves. They were these encoded email attachments: the intermediate format.
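The sketch below uses Python's standard email package to show the encoded form an email client produces. The addresses and the "PDF" bytes are placeholders, not real data. When a non-text attachment is supplied as bytes, the library's default content manager picks base64 as the transfer encoding.

```python
from email.message import EmailMessage

# Placeholder bytes standing in for a real PDF (illustrative only).
pdf_bytes = b"%PDF-1.4\n% placeholder, not a valid document\n%%EOF\n"

msg = EmailMessage()
msg["From"] = "alice@example.com"  # hypothetical addresses
msg["To"] = "bob@example.com"
msg["Subject"] = "Quarterly report"
msg.set_content("See attached.")
# Binary attachments get Content-Transfer-Encoding: base64 by default.
msg.add_attachment(pdf_bytes, maintype="application", subtype="pdf", filename="report.pdf")

raw = msg.as_bytes()
print(raw.decode("ascii"))
```

In the printed message, the attachment's encoded body starts after its headers and a blank line. It ends at the closing boundary line.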

Here's what makes this interesting from a forensic perspective: base64 encoding adds about 33% overhead. A 1MB file becomes roughly 1.33MB when base64-encoded. But more importantly, the encoding is completely reversible without any key or password. If you have the complete encoded string and know it's base64 (which is usually obvious from the character set), you can reconstruct the original file perfectly. Every single bit.
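The overhead figure comes from the encoding's arithmetic. Every 3 input bytes become 4 output characters, and the last partial group is padded. The sketch below does that calculation and cross-checks it against Python's encoder. The helper names are illustrative. The mime_length helper also assumes 76-character lines that each end in CRLF, which is the RFC 2045 line-length limit. That line wrapping is why email attachments run a few percent larger still.

```python
import base64

def base64_length(n_bytes: int) -> int:
    """Encoded length including '=' padding: 4 characters per started 3-byte group."""
    return (n_bytes + 2) // 3 * 4

def mime_length(n_bytes: int, line_len: int = 76) -> int:
    """Approximate size inside an email, assuming every line (even the last) ends in CRLF."""
    encoded = base64_length(n_bytes)
    lines = (encoded + line_len - 1) // line_len
    return encoded + 2 * lines

one_mb = 1_000_000
print(base64_length(one_mb))           # 1333336
print(base64_length(one_mb) / one_mb)  # 1.333336
print(mime_length(one_mb))

# Cross-check the formula against the standard library's encoder.
assert len(base64.b64encode(bytes(one_mb))) == base64_length(one_mb)
```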

The Technical Process: From String to Document

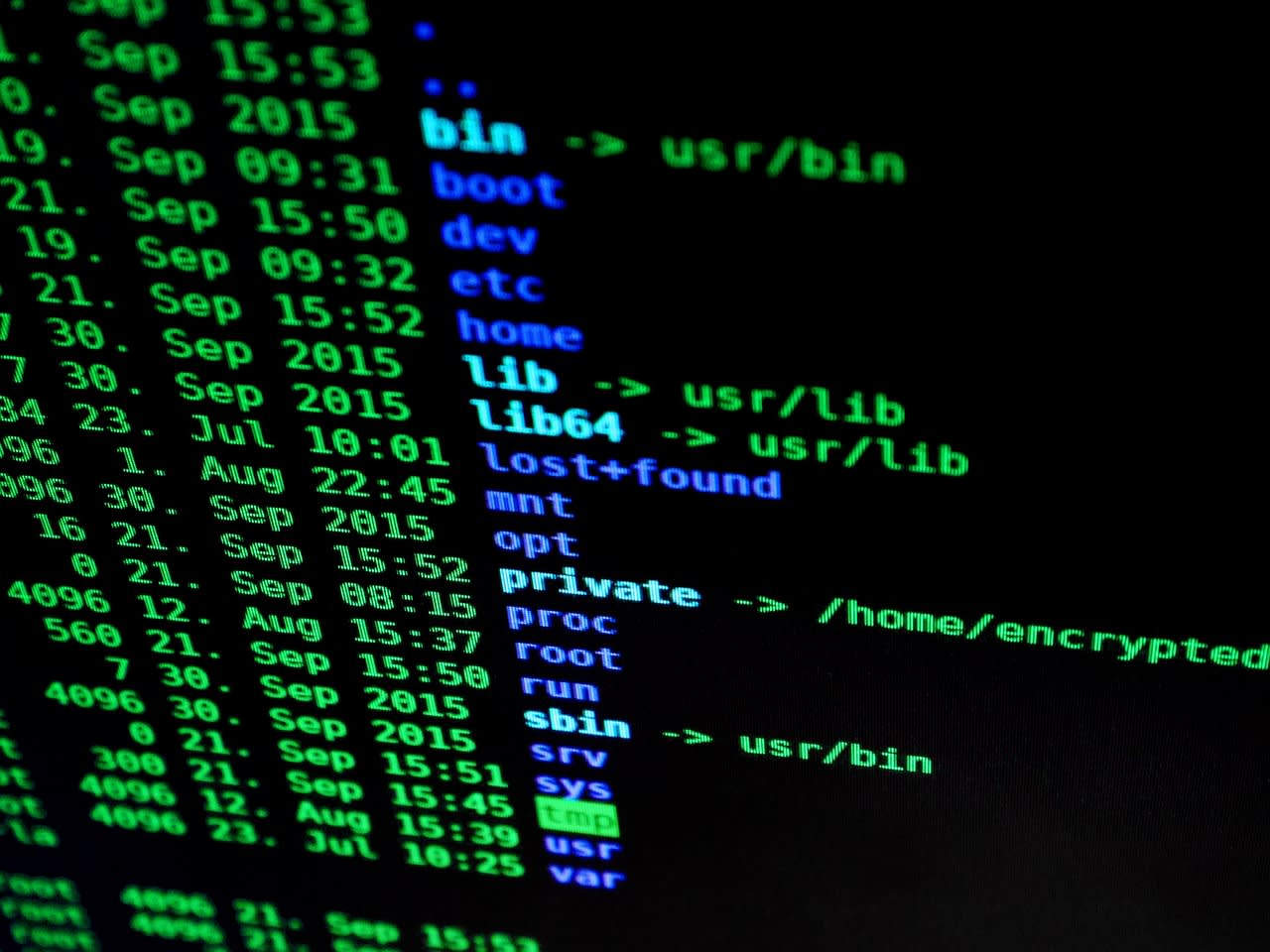

So how did people actually do this reconstruction? The process is surprisingly straightforward once you understand the steps. Most of the discussion on Reddit centered around using command-line tools—the kind of approach that feels like digital archaeology.

First, you need to extract the base64 string from wherever it's stored. In the Epstein case, these were often in email dumps or database exports. Inside a MIME message, the encoded data typically starts after the attachment's headers, which include a line such as Content-Transfer-Encoding: base64. It begins after the blank line that follows those headers and continues until the next boundary line. Sometimes you get clean data; other times, you need to strip out line breaks, remove MIME headers, or clean up corruption from the extraction process.
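Instead of hunting for the header and boundary by hand, you can let a MIME parser do the extraction. This is a minimal sketch using Python's standard email package. The helper name extract_attachments is illustrative, and the demo message is hypothetical. Calling get_payload(decode=True) reverses the part's Content-Transfer-Encoding, so headers and boundary lines never reach the base64 decoder.

```python
import email
from email import policy
from email.message import EmailMessage

def extract_attachments(raw: bytes) -> dict[str, bytes]:
    """Illustrative helper: {filename: decoded bytes} for every named leaf part."""
    msg = email.message_from_bytes(raw, policy=policy.default)
    found = {}
    for part in msg.walk():  # walk() also descends into nested multiparts
        if part.is_multipart():
            continue
        name = part.get_filename()
        if name is None:
            continue  # body text and other unnamed parts
        # decode=True undoes the transfer encoding (base64 here) and returns bytes.
        found[name] = part.get_payload(decode=True)
    return found

# Demo on a hypothetical message; in practice raw would come from a mail dump.
demo = EmailMessage()
demo.set_content("See attached.")
demo.add_attachment(b"%PDF-1.4 placeholder", maintype="application",
                    subtype="pdf", filename="report.pdf")
attachments = extract_attachments(demo.as_bytes())
print(attachments)
```

Attachments that share a filename overwrite each other in this sketch. A real workflow would key results on something unique, such as message ID plus part index.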

Once you have a clean base64 string, the decoding is one command away. On Linux or macOS, you'd use:

```shell
base64 -d encoded_string.txt > output.pdf
```

Or, if you're using Python (which many Reddit commenters preferred for handling messy data):

```python
import base64

# Read the extracted base64 text.
with open('encoded.txt', 'r') as f:
    encoded = f.read()

# By default, b64decode discards characters outside the base64 alphabet, such as newlines.
decoded = base64.b64decode(encoded)

# Write in binary mode so the decoded bytes are stored unchanged.
with open('reconstructed.pdf', 'wb') as f:
    f.write(decoded)
```

The real challenge isn't the decoding itself; it's handling imperfect data. Several commenters mentioned character encoding problems, missing chunks, or corrupted sections. One user spent hours trying to figure out why their PDF wouldn't open, only to discover their text editor had "helpfully" converted line endings, breaking the base64 encoding.
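For messy cases, a more defensive decoder helps. This is a sketch under a few assumptions: the input is standard base64 (not base64url), whitespace and CRLF line endings are safe to drop, and missing "=" padding may be restored. The name clean_and_decode is illustrative. Unlike a default b64decode call, it refuses stray characters instead of silently discarding them, so leftover headers or corruption surface as errors.

```python
import base64
import re

def clean_and_decode(text: str) -> bytes:
    """Illustrative helper: decode standard base64 that picked up whitespace or lost padding."""
    # Drop spaces, tabs, and both halves of CRLF line endings.
    compact = re.sub(r"\s+", "", text)
    # Reject anything outside the alphabet (e.g. a pasted MIME header or a BOM).
    if not re.fullmatch(r"[A-Za-z0-9+/]*={0,2}", compact):
        raise ValueError("non-base64 characters found; check for headers or corruption")
    # Re-pad to a multiple of 4; a remainder of 1 is unrecoverable and will still fail.
    compact = compact.rstrip("=")
    compact += "=" * (-len(compact) % 4)
    # validate=True raises binascii.Error (a ValueError subclass) on malformed input.
    return base64.b64decode(compact, validate=True)

sample = "JVBERi0x\r\nLjQK\r\n"  # "%PDF-1.4\n" wrapped with CRLF line endings
print(clean_and_decode(sample))  # b'%PDF-1.4\n'
print(clean_and_decode("QUI"))   # b'AB' (padding was missing)
```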

Forensic Implications: When "Deleted" Isn't Really Deleted

This is where things get really interesting from a cybersecurity perspective. The Epstein PDF reconstruction demonstrates something crucial: data persists in unexpected places. Even if the original PDFs were "deleted" from servers, the base64 representations lived on in email systems, backups, and logs.

Think about your own organization. How many emails containing base64-encoded attachments are sitting in your mail server logs? In backup tapes? In archived databases? From a forensic investigator's viewpoint, this is gold. Email systems often retain these encoded attachments long after the original files have been purged from file servers.
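If your archive can be exported as an mbox file, a short script can answer that question. This is a sketch using Python's standard mailbox module. The helper name is illustrative, and the demo writes a throwaway mbox containing one hypothetical message. It only inventories leaf parts that declare base64 transfer encoding and carry a filename.

```python
import mailbox
import os
import tempfile
from email.message import EmailMessage

def inventory_base64_attachments(mbox_path: str):
    """Illustrative helper: yield (subject, filename, decoded size) per base64 attachment."""
    box = mailbox.mbox(mbox_path, create=False)
    try:
        for msg in box:
            for part in msg.walk():
                if part.is_multipart() or part.get_filename() is None:
                    continue
                cte = (part.get("Content-Transfer-Encoding") or "").strip().lower()
                if cte != "base64":
                    continue
                data = part.get_payload(decode=True) or b""
                yield msg.get("Subject", ""), part.get_filename(), len(data)
    finally:
        box.close()

# Demo: a throwaway mbox holding one hypothetical message.
demo = EmailMessage()
demo["Subject"] = "Q3 report"
demo.set_content("See attached.")
demo.add_attachment(b"%PDF-1.4 placeholder", maintype="application",
                    subtype="pdf", filename="q3.pdf")

with tempfile.TemporaryDirectory() as tmp:
    path = os.path.join(tmp, "archive.mbox")
    out = mailbox.mbox(path)
    out.add(demo)
    out.close()  # close() flushes pending writes to disk
    rows = list(inventory_base64_attachments(path))
print(rows)  # [('Q3 report', 'q3.pdf', 20)]
```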

I've worked on investigations where we recovered years-old documents precisely this way. A company might have deleted sensitive financial reports from their SharePoint server, but those same reports were emailed to executives—and the base64 versions were still in the email journaling system. The legal implications are significant. In litigation or investigations, parties are often required to produce all relevant documents. If they only delete the "original" files but forget about encoded versions in emails, they haven't actually complied with their obligations.

Security Concerns: The Double-Edged Sword of Encoded Data

Reading through the Reddit comments, I noticed a recurring concern: security teams often overlook base64-encoded data. Most data loss prevention (DLP) systems focus on scanning native file formats. They might flag a PDF containing social security numbers, but would they catch those same numbers in a base64-encoded email attachment?

Probably not—unless the DLP system is specifically configured to decode and scan base64 content. And here's the kicker: base64 isn't the only encoding scheme out there. There's base32, uuencode, hexadecimal representations, and countless custom encoding methods. Sophisticated data exfiltration attempts often use encoding to bypass security controls.
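The sketch below shows the extra step a DLP rule would need to close that gap. It finds base64-looking runs in text, decodes them, and only then applies a content pattern. The 24-character minimum run length and the US SSN-shaped pattern are assumptions chosen for the example, not settings from any DLP product. The function name is illustrative. It deliberately ignores plain-text matches, which a conventional scan would already catch.

```python
import base64
import binascii
import re

# Assumption: runs of 24+ alphabet characters are worth trying to decode.
B64_RUN = re.compile(r"[A-Za-z0-9+/]{24,}={0,2}")
# Assumption: a US SSN-shaped pattern stands in for a real DLP content rule.
SSN = re.compile(rb"\b\d{3}-\d{2}-\d{4}\b")

def find_hidden_ssns(text: str) -> list[bytes]:
    """Illustrative helper: decode base64-looking runs and return SSN-shaped matches inside."""
    hits = []
    for match in B64_RUN.finditer(text):
        run = match.group()
        try:
            decoded = base64.b64decode(run + "=" * (-len(run) % 4), validate=True)
        except binascii.Error:
            continue  # looked like base64 but didn't decode
        hits.extend(SSN.findall(decoded))
    return hits

# Demo with a fabricated record; scanning log_line itself for SSN finds nothing.
payload = base64.b64encode(b"employee record SSN 123-45-6789").decode("ascii")
log_line = f"upload attachment={payload}"
print(find_hidden_ssns(log_line))  # [b'123-45-6789']
```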

But there's another side to this coin. Base64 encoding can actually improve security in some contexts. Because it converts binary to text, it allows binary data to pass through text-only systems safely. It prevents issues with character encoding corruption. And it's a standard, well-understood format that every system can handle predictably. The security risk isn't base64 itself—it's forgetting that base64-encoded data contains real, sensitive information that needs protection.

Practical Applications Beyond High-Profile Cases

You might be thinking, "This is interesting, but I'm not investigating billionaires. How does this apply to me?" More than you'd expect. Let me give you some real scenarios I've encountered:

First, data recovery. I once helped a small business recover their only copy of a critical contract. The original Word document was corrupted, but someone had emailed it to a client six months earlier. We pulled the base64 attachment from their email server logs and reconstructed a perfect copy. Total time: about 15 minutes. The alternative would have been expensive forensic recovery or renegotiating the contract.

Second, system migrations. When moving between email systems or document management platforms, encoded attachments sometimes get "stuck" in intermediate formats. Knowing how to recognize and reconstruct them saves countless headaches.

Third, security auditing. As part of penetration tests, I often look for base64-encoded data in unexpected places: log files, database fields, even environment variables. You'd be surprised how often credentials, API keys, or sensitive configuration data get base64-encoded and then forgotten about.
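Here is one illustration of that kind of check. The sketch flags environment variables whose values are valid base64 and decode to printable ASCII. The 16-character floor is an arbitrary assumption, and the function name is illustrative. The heuristic will produce false positives, because some ordinary tokens happen to be valid base64, so treat hits as leads to review rather than findings.

```python
import base64
import binascii
import os
import re

# Assumption: values of 16+ alphabet characters (plus optional padding) are candidates.
B64_VALUE = re.compile(r"[A-Za-z0-9+/]{16,}={0,2}")

def decodable_env_values(env=None) -> dict[str, str]:
    """Illustrative helper: name -> decoded text for base64-encoded printable ASCII values."""
    env = os.environ if env is None else env
    findings = {}
    for name, value in env.items():
        if not B64_VALUE.fullmatch(value) or len(value) % 4:
            continue
        try:
            text = base64.b64decode(value, validate=True).decode("ascii")
        except (binascii.Error, UnicodeDecodeError):
            continue
        if text.isprintable():
            findings[name] = text
    return findings

# Demo with a hypothetical environment; the credential is fake.
fake_env = {
    "APP_DB_CREDS": base64.b64encode(b"admin:not-a-real-password").decode("ascii"),
    "PATH": "/usr/local/bin:/usr/bin",
}
print(decodable_env_values(fake_env))  # {'APP_DB_CREDS': 'admin:not-a-real-password'}
```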

Tools and Techniques for Modern Forensic Work

In 2026, we have better tools than ever for this kind of work. While the command-line approach works perfectly fine, several Reddit commenters mentioned more sophisticated options. Forensic platforms like Autopsy and FTK now include built-in base64 detection and decoding. They'll automatically scan for encoded data and reconstruct files as part of their standard processing.

For larger-scale analysis—say, processing thousands of emails from an entire organization—you might need automation. This is where tools like Apify's web scraping and automation platform can be surprisingly useful. While typically used for web data extraction, their infrastructure can be adapted to process large volumes of encoded data systematically. I've set up workflows that extract base64 attachments from email exports, decode them, run them through document analysis pipelines, and categorize the results—all automated.
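The core of such a workflow can be sketched in plain Python on a single machine. This sketch is not tied to Apify or any other platform. It walks a directory of exported .eml files, decodes each named attachment, and categorizes the result by its leading "magic bytes" rather than by its claimed filename. The signature table covers only a few well-known formats, and the helper names are illustrative.

```python
import email
import tempfile
from collections import Counter
from email import policy
from email.message import EmailMessage
from pathlib import Path

# A few well-known file signatures ("magic bytes"); extend as needed.
SIGNATURES = {
    b"%PDF-": "pdf",
    b"PK\x03\x04": "zip/office",  # .docx and .xlsx are ZIP containers
    b"\x89PNG\r\n\x1a\n": "png",
    b"\xff\xd8\xff": "jpeg",
}

def classify(data: bytes) -> str:
    """Illustrative helper: label decoded bytes by their leading signature."""
    for magic, kind in SIGNATURES.items():
        if data.startswith(magic):
            return kind
    return "unknown"

def categorize_export(export_dir: str) -> Counter:
    """Illustrative helper: tally attachment types across every .eml under export_dir."""
    counts = Counter()
    for path in Path(export_dir).rglob("*.eml"):
        msg = email.message_from_bytes(path.read_bytes(), policy=policy.default)
        for part in msg.walk():
            if part.is_multipart() or part.get_filename() is None:
                continue
            counts[classify(part.get_payload(decode=True) or b"")] += 1
    return counts

# Demo: one hypothetical .eml export with two placeholder attachments.
with tempfile.TemporaryDirectory() as tmp:
    demo = EmailMessage()
    demo.set_content("Two attachments.")
    demo.add_attachment(b"%PDF-1.4 placeholder", maintype="application",
                        subtype="pdf", filename="a.pdf")
    demo.add_attachment(b"\x89PNG\r\n\x1a\n" + bytes(8), maintype="image",
                        subtype="png", filename="b.png")
    Path(tmp, "msg1.eml").write_bytes(demo.as_bytes())
    counts = categorize_export(tmp)
print(counts)
```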

If you're dealing with particularly messy or corrupted data, specialized hex editors can help. Synthexx Hex Editor tools let you manually examine and repair binary files at the byte level. Sometimes the issue isn't with the base64 decoding itself, but with corruption in the source data that needs manual fixing.

For teams without in-house expertise, hiring a digital forensics specialist on Fiverr can be a cost-effective way to handle one-off reconstruction projects. Many freelancers specialize in exactly this kind of data recovery work.

Common Pitfalls and How to Avoid Them

Based on the Reddit discussion and my own experience, here are the most common mistakes people make when working with base64-encoded data:

Character encoding issues top the list. When you copy base64 text between systems or applications, invisible changes can occur. Line endings might convert (CRLF to LF or vice versa). Spaces might get added or removed. Some text editors "helpfully" re-save the file in a different encoding or prepend a byte-order mark, corrupting the data. When possible, work with plain-text or hex editors that leave bytes untouched, and verify your source data hasn't been altered.
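A quick pre-flight check can catch these problems before decoding. This sketch reads the file as raw bytes, so nothing is converted on the way in. It reports a UTF-8 byte-order mark, CRLF line endings, and any bytes outside the base64 alphabet. It also computes a SHA-256 fingerprint you can compare with one recorded when the data was first collected. The function names are illustrative, not from any particular tool.

```python
import hashlib
import re

BOM = b"\xef\xbb\xbf"  # UTF-8 byte-order mark some editors prepend on save

def lint_base64_bytes(raw: bytes) -> list[str]:
    """Illustrative helper: report transit damage in base64 text read in binary mode."""
    problems = []
    if raw.startswith(BOM):
        problems.append("UTF-8 byte-order mark at start of file")
    if b"\r\n" in raw:
        problems.append("CRLF line endings (tolerated by some decoders, not all)")
    # Anything that isn't alphabet, padding, or ASCII whitespace is suspect.
    stray = set(re.findall(rb"[^A-Za-z0-9+/=\s]", raw.removeprefix(BOM)))
    if stray:
        problems.append(f"non-base64 bytes present: {sorted(stray)}")
    return problems

def fingerprint(raw: bytes) -> str:
    """SHA-256 hex digest; compare with the value recorded at collection time."""
    return hashlib.sha256(raw).hexdigest()

damaged = BOM + b"JVBERi0x\r\nLjQK\r\n"
for problem in lint_base64_bytes(damaged):
    print(problem)
print(fingerprint(damaged))
```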

Mishandled MIME headers are another frequent problem. Base64-encoded email attachments are usually preceded by MIME headers that aren't part of the actual base64 data. If you try to decode the headers along with the data, you'll get errors or a corrupted file. You need to isolate exactly where the base64 content begins and ends.
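When you're working from a raw text dump rather than a parseable message, you can isolate the body by hand. This sketch assumes the input is a single MIME part. Headers end at the first blank line, and the body ends at the next line starting with "--", which marks a boundary. Standard base64 never contains "-", so real data can't be mistaken for a boundary. The helper name, sample part, and boundary string are made up for illustration.

```python
import base64

def body_of_mime_part(part_text: str) -> str:
    """Illustrative helper: return the base64 body of one MIME part, line breaks removed."""
    lines = part_text.splitlines()
    # Headers end at the first blank line (as in RFC 5322).
    blank = next((i for i, line in enumerate(lines) if not line.strip()), None)
    if blank is None:
        raise ValueError("no blank line separating headers from body")
    body = []
    for line in lines[blank + 1:]:
        if line.startswith("--"):  # next boundary delimiter reached
            break
        body.append(line.strip())
    return "".join(body)

part = (
    'Content-Type: application/pdf; name="report.pdf"\r\n'
    "Content-Transfer-Encoding: base64\r\n"
    'Content-Disposition: attachment; filename="report.pdf"\r\n'
    "\r\n"
    "JVBERi0x\r\n"
    "LjQK\r\n"
    "--example-boundary--\r\n"
)
print(base64.b64decode(body_of_mime_part(part)))  # b'%PDF-1.4\n'
```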

Assuming all base64 is the same can trip you up. There are actually several variants: standard base64; base64url, used in URLs and filenames, which substitutes - and _ for + and / and often drops the = padding; and others with slightly different character sets. Most decoding tools handle the common variants, but if you're working with data from an unusual source, you might need to specify the exact format.
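Python's standard library, for example, exposes both alphabets. This minimal sketch uses the URL-safe decoder when it sees "-" or "_", and restores padding that base64url data often omits. The function name is illustrative. A string that contains none of "-", "_", "+", or "/" decodes identically either way, so the choice of alphabet only matters when those characters appear.

```python
import base64

def decode_any_variant(s: str) -> bytes:
    """Illustrative helper: decode standard base64 or base64url, restoring omitted padding."""
    s = s.strip()
    padded = s + "=" * (-len(s) % 4)
    if "-" in s or "_" in s:
        # base64url (RFC 4648, section 5) uses '-' and '_' in place of '+' and '/'.
        return base64.urlsafe_b64decode(padded)
    return base64.b64decode(padded, validate=True)

print(decode_any_variant("+//+"))  # b'\xfb\xff\xfe' (standard alphabet)
print(decode_any_variant("-__-"))  # b'\xfb\xff\xfe' (URL-safe alphabet)
print(decode_any_variant("QUI"))   # b'AB' (padding restored)
```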

Finally, forgetting about the original file's integrity. Just because you successfully decode base64 doesn't mean the resulting file is perfect. The original might have been corrupted before encoding. Always verify reconstructed files actually open and contain what you expect.
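For PDFs, a few cheap checks catch the most common failures before you bother opening the file. In this sketch, the 1 KiB window for the %%EOF marker is a tolerance heuristic. The specification expects the marker on the file's last line, but real files often carry trailing bytes. Passing these checks only shows that the file is plausibly complete, not that every object inside it is intact. The SHA-256 digest records exactly which bytes you reconstructed. The function name is illustrative.

```python
import hashlib

def check_pdf(data: bytes) -> dict:
    """Illustrative helper: plausibility checks for a reconstructed PDF, plus a digest."""
    return {
        # A PDF begins with a header such as "%PDF-1.7".
        "has_pdf_header": data.startswith(b"%PDF-"),
        # A truncated decode usually loses the trailing %%EOF marker.
        "has_eof_marker": b"%%EOF" in data[-1024:],
        "size_bytes": len(data),
        "sha256": hashlib.sha256(data).hexdigest(),
    }

complete = b"%PDF-1.4\n1 0 obj\n<<>>\nendobj\ntrailer\n<<>>\n%%EOF\n"
truncated = complete[:20]
print(check_pdf(complete)["has_eof_marker"])   # True
print(check_pdf(truncated)["has_eof_marker"])  # False
```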

Ethical Considerations and Responsible Disclosure

The Epstein case raises important ethical questions that several Reddit commenters grappled with. When you have the technical ability to reconstruct sensitive documents, should you? What are your responsibilities?

In professional forensic work, we operate under strict protocols. We only examine data we're authorized to access. We maintain chain of custody. We protect sensitive information. The same principles should apply even in personal or research contexts. Just because you can reconstruct something doesn't mean you should—especially if it involves private personal information, classified material, or data subject to legal restrictions.

If you do encounter sensitive data during legitimate work, responsible disclosure matters. Don't publish personally identifiable information. Don't share documents that could harm innocent people. The cybersecurity community generally respects these boundaries, and the Reddit discussion reflected that maturity. Most commenters focused on the technical process rather than the content of the documents themselves.

The Future of Data Persistence and Recovery

Looking ahead to 2026 and beyond, what does the Epstein PDF reconstruction teach us about where data forensics is heading? A few trends stand out.

First, data is becoming more fragmented. Files don't exist in one place anymore. They're split across cloud services, encoded in logs, cached locally, backed up in multiple formats. Forensic investigations increasingly involve piecing together these fragments—exactly what happened with the base64 reconstructions.

Second, automation is essential. Manual reconstruction might work for a few files, but at scale, you need automated tools that can identify, extract, and decode encoded data across petabytes of storage. Machine learning is starting to help here, with systems that can recognize encoded patterns even without explicit markers.

Third, privacy and security are in tension. The same techniques that recover important evidence can also invade privacy. The same encoding methods that safely transmit data can also hide exfiltrated information. As professionals, we need to navigate these tensions carefully, with clear ethical frameworks.

Putting Knowledge Into Practice

So what should you do with this information? Start by looking at your own systems. Check if your security tools scan base64-encoded content. Review your data retention policies—do they account for encoded versions of documents? Consider running a test: take a sample document, email it internally, and see if you can reconstruct it from your mail logs a month later.

Build the skill. Set up a test environment with some base64-encoded data and practice reconstruction. The technical process is simple enough that any IT professional should be comfortable with it. But like any skill, it's better to learn before you need it urgently.

Most importantly, change your mindset about what constitutes "data." It's not just files in folders. It's encoded strings in databases. It's attachments in email journals. It's cached copies in unexpected places. The Epstein PDF reconstruction reminds us that in our digital world, information has a way of persisting—often in forms we don't immediately recognize.

The next time you see a wall of seemingly random characters in a log file or database dump, pause. Look closer. That gibberish might be the key to recovering something important, understanding a security incident, or piecing together a digital history. And now you know exactly what to do with it.