The Breaking Point: When Platforms Demand Your Face

It starts with a simple notification. "Verify your age to continue." You're trying to watch a gaming tutorial, a documentary, or maybe just some comedy that happens to be marked 18+. The platform—YouTube, Roblox, TikTok—wants either government ID or a facial scan. No verification, no access. For the Reddit user who sparked this discussion, that moment came when YouTube flagged two accounts as "underage" based on viewing habits that included speedrunning content. The AI decided speedrunning was "for children," and suddenly, watching anything marked mature required surrendering biometric data or personal documents.

This isn't an isolated incident. It's happening across platforms, and privacy advocates are hitting their limit. The response? A growing boycott of any non-essential service demanding ID verification. But what does this movement look like in practice? And more importantly—can it actually work?

Why Verification Demands Are Exploding in 2026

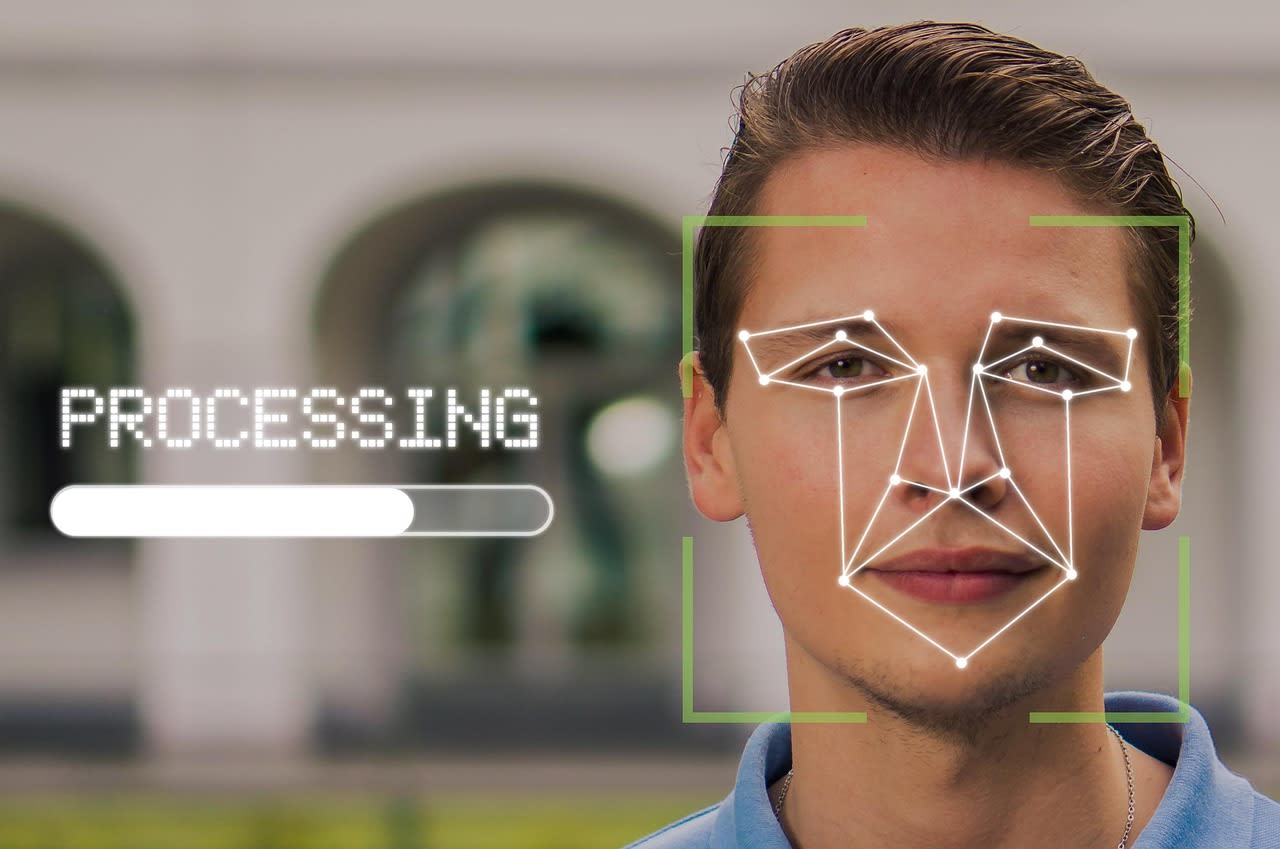

First, let's understand why we're here. The push for age verification isn't coming from nowhere. Governments worldwide have been passing laws, such as the UK's Online Safety Act and a growing number of US state statutes, that require platforms to verify users' ages before showing certain content. Many platforms have responded with some of the most invasive methods available. That is not because the laws specifically mandate facial scans or ID uploads (often they don't), but because these methods serve several purposes at once.

Think about it from their perspective. Once they have your verified identity, they can:

- Tie all your online activity to your real-world identity

- Create more valuable advertising profiles

- Prevent users from creating multiple accounts

- Build datasets for training their AI systems

- Comply with various regulations in one sweeping move

It's convenient for them. But for users? It's a privacy nightmare. And the creep is real. What starts with "age verification for adult content" quickly expands to verification for commenting, for uploading, for accessing certain features. Before you know it, you need to scan your face just to use basic platform functions.

The Real Risks Beyond "Just Showing ID"

When people dismiss these concerns with "what's the big deal?" they're missing several critical points. First, data breaches happen constantly. In 2025 alone, there were 3,800 publicly reported breaches exposing over 8 billion records. Your facial scan or ID document sitting in yet another corporate database is just another target.

Second, mission creep is inevitable. That facial recognition data collected for "age verification" gets repurposed. Maybe for emotion detection in ads. Maybe for identifying you across platforms. Maybe sold to data brokers who combine it with other information. You lose control the moment you hand it over.

Third, false positives and algorithmic bias create real problems. Take the Reddit user whose interest in speedrunning triggered YouTube's "underage" flag: these systems make mistakes, and they make them unevenly. The widely cited Gender Shades study (2018) found that commercial facial-analysis systems misclassified darker-skinned women at error rates of up to about 34%, compared with under 1% for lighter-skinned men. When verification becomes mandatory, errors like these lock legitimate users out of services they have paid for or depend on.

And finally, there's the normalization effect. Each time we accept these demands, we set a precedent. We tell companies this level of intrusion is acceptable. We make it harder to push back next time.

What Actually Counts as "Non-Essential"?

The original post makes an important distinction: boycotting non-essential services. This is crucial because being completely off-grid in 2026 is nearly impossible for most people. Your bank will need to verify your identity. Your employer's systems might require it. Government services certainly will.

But your video streaming platform? Your gaming account? Your social media? That's where you draw the line. Here's my personal framework:

Essential: Banking, healthcare portals, government services, employment systems, utilities. These get a pass because alternatives don't exist or come with severe life consequences.

Non-essential: Entertainment platforms, social media, gaming services, shopping sites (except for age-restricted purchases), news sites, forums. These are where you push back.

The tricky part comes with services that straddle the line. Email, for instance. The original poster still uses Gmail despite boycotting other Google services. That's a practical compromise many make—recognizing that switching email providers comes with significant friction while still refusing to verify for YouTube.

Practical Alternatives: What to Use Instead

Okay, so you're boycotting YouTube because they want your face. What do you actually do instead? The good news is that alternatives exist for nearly every service demanding verification.

For video content, consider:

- PeerTube instances: Federated, open-source video hosting

- Odysee: Blockchain-based YouTube alternative

- Invidious instances: Front-ends that let you watch YouTube content without an account

- Specialized platforms: Many creators now cross-post to platforms like Floatplane or Nebula

For gaming platforms like Roblox that demand verification:

- Open-source alternatives: Games like Luanti (formerly Minetest, a Minecraft-style voxel game) and other open-source games and engines

- DRM-free stores: GOG.com for PC games

- Local multiplayer or self-hosted game servers

The key is accepting that you might need to use multiple platforms instead of one monolithic service. It's less convenient, absolutely. But convenience is what got us into this privacy mess in the first place.

The Technical Workarounds (And Their Limits)

Some tech-savvy users try to bypass verification systems: using VPNs to appear in jurisdictions without verification laws, creating accounts with "verified" ages before the requirements hit, or using privacy-focused browsers with strong fingerprinting protection.

These can work—temporarily. But platforms are getting smarter. They're implementing device fingerprinting, requiring phone verification as a backup, or using behavioral analysis to detect evasion attempts. The arms race favors the platforms with their billions in development resources.
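To see why a VPN alone often isn't enough, here is a minimal sketch of the idea behind device fingerprinting. It is an illustration under stated assumptions, not how any particular platform does it: the attribute names, the sample values, and the `device_fingerprint` helper are all hypothetical, and real systems combine far more signals.

```python
import hashlib
import json


def device_fingerprint(attributes: dict) -> str:
    """Hash a set of device/browser attributes into a short, stable identifier."""
    # Sort keys so the same attributes always serialize the same way.
    canonical = json.dumps(attributes, sort_keys=True)
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()[:16]


# Hypothetical attributes a site's script might be able to read.
browser = {
    "user_agent": "ExampleBrowser/1.0",
    "screen": "2560x1440",
    "timezone": "Europe/Berlin",
    "fonts": ["DejaVu Sans", "Noto Serif"],
    "gpu": "Example GPU 9000",
}

# Same device, two visits: one direct, one through a VPN (documentation IPs).
home_visit = {"ip": "203.0.113.7", "fingerprint": device_fingerprint(browser)}
vpn_visit = {"ip": "198.51.100.42", "fingerprint": device_fingerprint(browser)}

# The IP address changed, but nothing the fingerprint depends on did.
same_device = home_visit["fingerprint"] == vpn_visit["fingerprint"]
print(same_device)  # True
```

The VPN changes the one thing the fingerprint never looked at. Defeating this means changing the inputs themselves, which is what fingerprinting-resistant browsers attempt, and why it remains an arms race.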

That's why the boycott approach is fundamentally different. It's not about tricking the system. It's about refusing to participate. It's voting with your attention and your wallet. And when enough people do it, platforms notice.

When Verification Might Actually Be Worth It

Let's be honest—there are edge cases. Say you're a content creator whose livelihood depends on a platform. Or you need to access verified information for work. Or you're part of a community that only exists on one platform.

In these cases, if you must verify, do it as safely as possible:

- Use the least invasive verification option (if they offer age estimation without ID, try that first)

- Use a dedicated email address not tied to your real identity

- Consider using a virtual phone number if phone verification is required

- Use the platform in a privacy-focused browser or container

- Regularly review what data the platform has collected about you

But even then, ask yourself: Is there truly no alternative? Could you migrate your community? Could you diversify your income streams? The more dependent we are on any single platform, the more power they have to impose these requirements.

How to Make Your Boycott Actually Matter

Individual boycotts feel symbolic. Collective action creates change. Here's how to make yours count:

First, tell the platforms why you're leaving. When you cancel a subscription or delete an account, use the feedback option. Be specific: "I'm leaving because you require facial recognition for age verification." Metrics matter to these companies. If enough people cite privacy as their reason for churn, product teams notice.

Second, support alternatives. Use them, pay for them if they have paid tiers, recommend them to friends. The biggest barrier to privacy-friendly alternatives is network effects. They need users to thrive.

Third, talk about it. Like the Reddit user who started this discussion. Share your experiences. Normalize pushing back. When friends complain about verification demands, suggest alternatives instead of just sympathizing.

Fourth, consider the legal angle. In some jurisdictions, you can file data protection complaints. The GDPR in Europe, for instance, includes a data minimisation principle: personal data must be limited to what is necessary for the stated purpose. Arguably, a facial scan for age verification fails that test when less invasive options exist.

The Future: Where This Is Headed in 2026 and Beyond

The trend is clear: more verification, not less. The next battleground will be "identity verification for safety"—platforms arguing they need to know who you are to prevent harassment, misinformation, or other harms. There's some truth to that argument, which makes it more dangerous.

We're also seeing verification creep into physical spaces. Some concerts now require digital ID verification for tickets. Apartment buildings use facial recognition for entry. The line between online and offline verification is blurring.

The hopeful counter-trend? Decentralized identity systems. These projects use cryptographic techniques, such as zero-knowledge proofs and selective-disclosure credentials, to prove you're over 18 without revealing your birth date, or to prove you're a real person without showing your face. They exist in early forms today. Whether they'll gain mainstream adoption before mandatory verification becomes ubiquitous is the real question.
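To make "prove you're over 18 without revealing your birth date" concrete, here is a toy sketch of salted-hash selective disclosure, loosely in the spirit of selective-disclosure credentials. Everything in it is an assumption for illustration: the function names are hypothetical, and the HMAC with a shared `ISSUER_KEY` is only a standard-library stand-in for a real public-key signature (in a real system the verifier would hold only the issuer's public key).

```python
import hashlib
import hmac
import json
import secrets
from datetime import date

# Stand-in for the issuer's signing key. Real systems use public-key signatures.
ISSUER_KEY = secrets.token_bytes(32)


def _digest(salt: str, name: str, value) -> str:
    """Salted hash of one claim; the salt keeps hidden claims unguessable."""
    return hashlib.sha256(json.dumps([salt, name, value]).encode("utf-8")).hexdigest()


def _sign(digests: list) -> str:
    message = json.dumps(sorted(digests)).encode("utf-8")
    return hmac.new(ISSUER_KEY, message, hashlib.sha256).hexdigest()


def issue_credential(birth_date: date, today: date):
    """Issuer sees the birth date once and signs hashes of the derived claims."""
    had_birthday = (today.month, today.day) >= (birth_date.month, birth_date.day)
    age = today.year - birth_date.year - (0 if had_birthday else 1)
    claims = {"birth_date": birth_date.isoformat(), "over_18": age >= 18}
    disclosures = {
        name: (secrets.token_hex(16), name, value) for name, value in claims.items()
    }
    digests = [_digest(*d) for d in disclosures.values()]
    return {"digests": digests, "signature": _sign(digests)}, disclosures


def verify_over_18(credential: dict, disclosure: tuple) -> bool:
    """Verifier checks the signature and the single claim the holder revealed."""
    expected = _sign(credential["digests"])
    if not hmac.compare_digest(expected, credential["signature"]):
        return False
    salt, name, value = disclosure
    if _digest(salt, name, value) not in credential["digests"]:
        return False
    return name == "over_18" and value is True


credential, disclosures = issue_credential(date(1990, 5, 17), today=date(2026, 1, 1))
presented = disclosures["over_18"]  # the birth_date disclosure stays with the holder
print(verify_over_18(credential, presented))  # True
```

The platform receives only signed hashes plus one revealed claim. The birth date is in the credential as a salted hash, so the platform can neither read it nor guess it by brute force.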

Your Action Plan Starting Today

Feeling overwhelmed? Start small:

- Audit your current services: List the platforms you use that require, or might soon require, verification.

- Identify your essentials: Which could you realistically not replace?

- Pick one non-essential to replace this month: Maybe your video streaming service. Maybe a game platform.

- Set up alternatives gradually: Migrate subscriptions, inform contacts, export your data.

- When faced with new verification demands, say no: Even if it means losing access to something you enjoy.
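If a spreadsheet feels like too much, the first three steps fit in a few lines of code. This is only a sketch of the essential/non-essential framework described earlier. The service names are made up, the `Service` and `plan_boycott` names are hypothetical, and the category list is one person's judgment call, not a standard.

```python
from dataclasses import dataclass

# Categories treated as essential in this article's framework.
ESSENTIAL_CATEGORIES = {"banking", "healthcare", "government", "employment", "utilities"}


@dataclass
class Service:
    name: str
    category: str
    demands_verification: bool


def plan_boycott(services):
    """Split services into ones to keep and ones to replace."""
    keep, replace = [], []
    for service in services:
        essential = service.category in ESSENTIAL_CATEGORIES
        if essential or not service.demands_verification:
            keep.append(service.name)
        else:
            replace.append(service.name)
    return keep, replace


# Hypothetical audit list; swap in your own services.
services = [
    Service("ExampleBank", "banking", True),
    Service("ExampleTube", "video", True),
    Service("ExampleForum", "forum", False),
    Service("ExampleGames", "gaming", True),
]

keep, replace = plan_boycott(services)
first_target = replace[0] if replace else None  # the one to replace this month

print("Keep:", keep)
print("Replace:", replace)
print("Start with:", first_target)
```

The point isn't the code. It's that the decision rule is simple enough to write down: essential services get a pass, everything else that demands your ID goes on the replacement list.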

Remember—this isn't about perfection. The original poster still uses Gmail. I still use services that aren't perfect. It's about drawing a line where you can and pushing back against the creep.

The most powerful message we can send isn't through angry posts (though those help). It's through our behavior. When platforms see users leaving rather than complying, when they see growth slowing in regions with strict verification, when they face real financial consequences—that's when policies change.

Your privacy isn't just about hiding. It's about autonomy. It's about deciding what parts of yourself to share, with whom, and on what terms. Every time you refuse to scan your face for a gaming video, you're asserting that autonomy. And in 2026, that assertion matters more than ever.