The New Reality: ICE's Mobile Facial Recognition Units

You're walking down the street, maybe heading to a protest or just going about your day. What you probably don't realize is that ICE agents might be scanning your face from a hundred feet away using nothing more than a smartphone. This isn't some dystopian fiction—it's happening right now in 2026, and the implications are terrifying.

I've been tracking surveillance technology for years, and what ICE is doing represents a fundamental shift in how law enforcement operates. They're not just using stationary cameras anymore. They're taking surveillance mobile, turning public spaces into real-time identification zones. And the worst part? Most people have no idea it's happening until it's too late.

The original NBC News report that sparked the Reddit discussion revealed something crucial: ICE agents are using a tool called Clearview AI on their personal phones. That's right—personal devices. No special equipment needed. Just download an app, and suddenly any agent can identify anyone they point their camera at. It's surveillance democratized, and it's happening without the oversight you'd expect for such powerful technology.

How Mobile Facial Recognition Actually Works

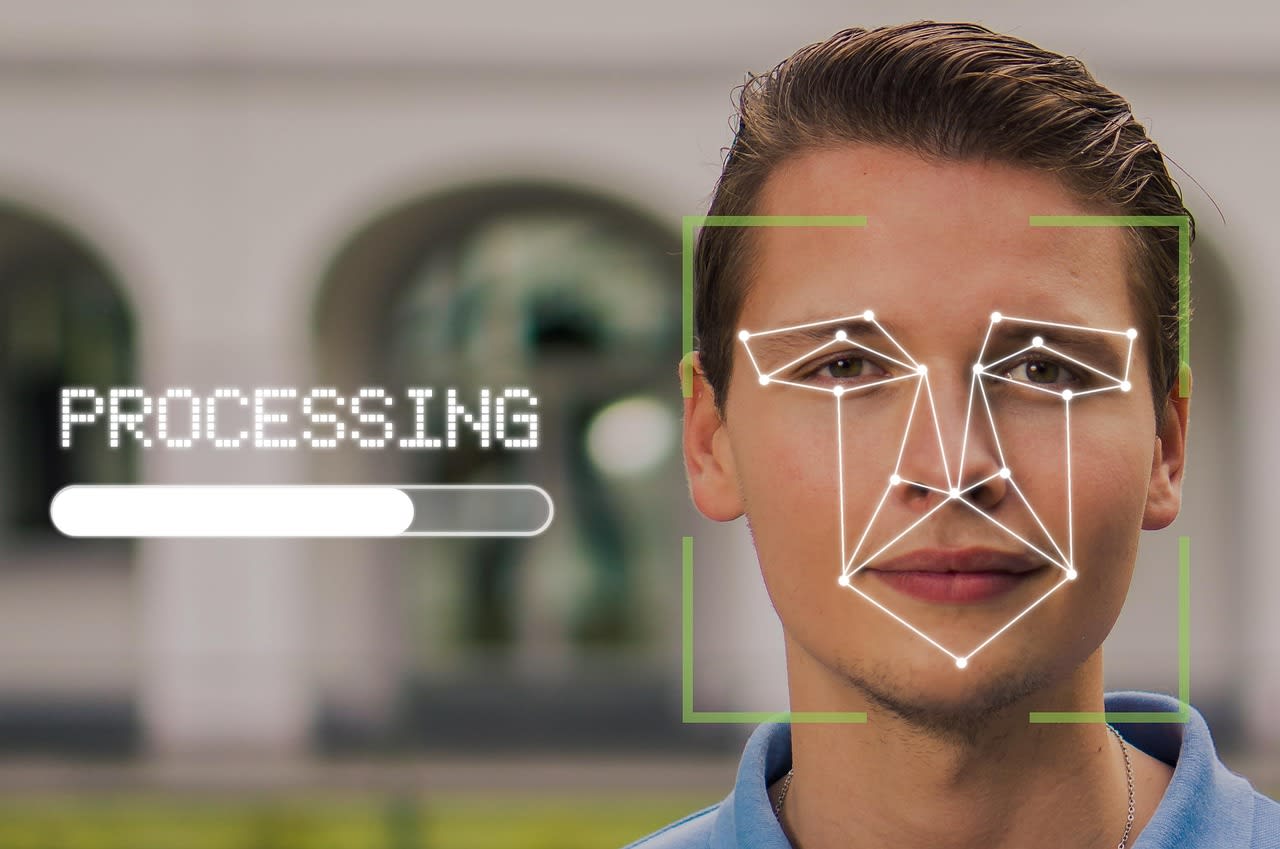

Let me break down how this technology functions in practice, because understanding the mechanics is the first step to understanding the threat. When an ICE agent uses their phone's camera with Clearview AI or similar software, here's what happens:

The app captures your face, converts it into a mathematical representation called a facial signature, and compares it against a massive database of images scraped from social media, government records, and other sources. We're talking billions of images here. The system then returns potential matches with confidence scores, often within seconds.
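To make that pipeline concrete, here's a minimal sketch of the matching step. It is not Clearview AI's actual code, which isn't public. I'm assuming the common approach of comparing facial signatures with cosine similarity, and every name, vector, and threshold below is hypothetical and chosen only for illustration.

```python
import math

def cosine_similarity(a, b):
    """Return the cosine similarity of two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

def find_matches(probe, gallery, threshold=0.8, top_k=3):
    """Score the probe against every gallery entry and keep the best hits.

    The threshold and top_k values are illustrative assumptions,
    not settings taken from any real product.
    """
    scored = [(label, cosine_similarity(probe, vec)) for label, vec in gallery.items()]
    hits = [(label, score) for label, score in scored if score >= threshold]
    return sorted(hits, key=lambda item: item[1], reverse=True)[:top_k]

# Hypothetical 4-number "facial signatures". Real systems use far longer vectors
# produced by a neural network; these are made up for the example.
gallery = {
    "profile_photo_a": [0.9, 0.1, 0.3, 0.2],
    "profile_photo_b": [0.1, 0.8, 0.2, 0.6],
    "profile_photo_c": [0.85, 0.15, 0.35, 0.25],
}
street_capture = [0.88, 0.12, 0.32, 0.22]

matches = find_matches(street_capture, gallery)
for label, score in matches:
    print(f"{label}: similarity {score:.3f}")
```

Notice that two different gallery entries clear the threshold here. The score is a measure of resemblance, not proof of identity, which is why the system returns "potential matches" rather than a single answer.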

What makes this particularly concerning is the database sourcing. Clearview AI built its system by scraping images from platforms like Facebook, Instagram, and LinkedIn without user consent. So that profile picture you uploaded years ago? It might be in their database right now, waiting to be matched against a street capture.

And here's something most people don't realize: the technology keeps getting better. In 2026, these systems can often identify people from partial faces, at angles, in poor lighting, and even when they're wearing masks. Accuracy rates that were questionable a few years ago have improved dramatically, which makes false positives less common and the surveillance itself more effective.

The Legal Gray Zone ICE Is Exploiting

Now, you might be thinking: "Surely this is illegal, right?" Well, that's where things get complicated—and frankly, alarming. ICE operates in what privacy experts call a "legal gray zone" when it comes to facial recognition.

First, there's the Fourth Amendment issue. Generally, you don't have a reasonable expectation of privacy in public spaces. Courts have ruled that what you do in public can be observed and recorded. But here's the twist: facial recognition technology fundamentally changes the nature of that observation. It's not just someone seeing you—it's a system instantly identifying you, linking you to your entire digital footprint, and potentially flagging you for further action.

Second, ICE often uses this technology during what they call "collateral" encounters. They might be looking for one person but scan everyone in the vicinity. The Reddit discussion highlighted concerns about this dragnet approach—innocent people getting caught in surveillance nets meant for others.

Third, and this is crucial: there's minimal oversight. Unlike wiretaps, which require warrants, facial recognition scans often happen without judicial approval. Agents make judgment calls in the field, and those decisions can have life-altering consequences for the people being scanned.

Protest Surveillance: Chilling Free Speech

One of the most disturbing applications discussed in the original thread is protest monitoring. ICE agents have been documented using facial recognition at immigration-related protests and other demonstrations.

Think about what this means for free speech. You're exercising your First Amendment rights, and government agents are secretly identifying you, potentially adding you to watchlists, or using that information in immigration proceedings. The chilling effect is real—people might avoid protests altogether if they fear being identified and targeted.

I've spoken with activists who've noticed ICE presence at their events. They describe agents standing at the periphery, holding phones in a way that suggests they're not just taking photos but actively scanning faces. Sometimes they're obvious; sometimes they blend in. Either way, the message is clear: we're watching, and we know who you are.

This isn't just theoretical. There are documented cases where protest attendees later faced immigration consequences. While direct causation is hard to prove, the timing and circumstances suggest their participation was noted and acted upon.

The Data Trail You Can't Escape

Here's what keeps me up at night: the permanence of these scans. When ICE captures your facial data, where does it go? How long is it stored? Who has access to it?

Based on what we know in 2026, here's the troubling reality: scans often end up in multiple places. They can sit in ICE's own systems and on Clearview AI's servers (which have suffered data breaches, by the way), and they may be shared with other agencies through information-sharing agreements.

That data doesn't just disappear. Even if you're completely innocent, your facial signature might remain in systems for years, accessible to various government entities. Future scans can then be matched against this historical data, creating a timeline of your movements and associations.

And consider this: facial recognition systems aren't perfect. False matches happen, and studies have found higher error rates for people of color. A false positive could mean detention, questioning, or being added to a watchlist based on mistaken identity.
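A bit of arithmetic shows why even a tiny error rate matters at this scale. The numbers below are hypothetical, picked only to illustrate the effect: a gallery of one billion images and a per-comparison false match rate of one in ten million. Neither figure comes from ICE or Clearview AI.

```python
def expected_false_matches(gallery_size: int, one_in: int) -> float:
    """Expected number of wrong hits when one probe is compared against
    every gallery image and each comparison errs once per `one_in` tries."""
    return gallery_size / one_in

# Hypothetical inputs, for illustration only.
gallery_size = 1_000_000_000   # "billions of images" scale
one_in = 10_000_000            # assumed false match rate: 1 in 10 million

false_hits = expected_false_matches(gallery_size, one_in)

# Assume the person scanned really is in the gallery and is found once.
true_hits = 1
wrong_share = false_hits / (false_hits + true_hits)

print(f"Expected false matches per scan: {false_hits:.0f}")
print(f"Share of candidates that are the wrong person: {wrong_share:.1%}")
```

Under those assumptions, a single scan produces about 100 wrong candidates alongside the one right one. Real systems narrow the list with thresholds and ranking, so treat this as a rough illustration of the base-rate problem rather than a measurement of any deployed tool.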

Practical Protection: What Actually Works in 2026

Okay, enough doom and gloom. Let's talk about what you can actually do to protect yourself. I've tested various approaches, and here's what I've found works—and what doesn't.

First, understand that complete anonymity in public is nearly impossible if someone really wants to identify you. But you can make it harder and less reliable.

Clothing and accessories matter more than you might think. Broad-brimmed hats, scarves, and certain types of face masks can interfere with facial recognition algorithms. I'm not talking about medical masks—those don't work well anymore. Look for patterns and shapes that specifically confuse the algorithms. Some companies now make clothing with infrared-reflective materials that confuse cameras, though these can be expensive.

Posture and movement help too. Keeping your head down, avoiding direct eye contact with cameras, and moving in groups can reduce capture quality. It's not foolproof, but it raises the error rate.

Digital hygiene is crucial. The fewer photos of your face that are online, the fewer reference images there are to match you against. Consider removing facial photos from social media or making profiles private. Yes, this is inconvenient, but it directly reduces your exposure.

Technological Countermeasures That Matter

Beyond physical approaches, there are technological strategies worth considering in 2026.

Privacy-focused browsers and search engines help minimize your broader digital footprint, even though they do nothing about face scans directly. I recommend using alternatives to Google and Facebook that collect less data about you. DuckDuckGo and Startpage are good starting points for search, though they're not perfect solutions.

For social media, consider using pseudonyms and never posting facial photos. I know this sounds extreme, but remember: every facial image online is potential training data for surveillance systems.

There are also apps that claim to detect facial recognition cameras, though in my testing, their reliability varies. They work by identifying camera characteristics and known surveillance locations, but they can't detect every instance, especially mobile units.

If you're technically inclined, you might explore tools that automate the removal of your images from data broker sites. While not specifically for facial recognition databases, reducing your overall online presence helps. Some services offer this as a subscription, or you can learn to do it yourself through various online guides.

Legal Rights and Pushback Strategies

Knowing your rights is essential, even if those rights are being tested by new technology.

If approached by ICE agents, you generally have the right to remain silent and the right to refuse consent to searches. You can ask if you're free to leave, and if the answer is yes, you can calmly walk away. Document everything: agents' badge numbers, what was said, and the time and location. This creates a record that might be useful later.

Support organizations fighting these practices. The Electronic Frontier Foundation, ACLU, and local privacy rights groups are challenging facial recognition use in courts and legislatures. Their work creates precedents and sometimes leads to restrictions.

Push for local legislation. Several cities have banned government use of facial recognition. While this doesn't affect federal agencies like ICE, it creates pressure and establishes norms. Your city or state might be next.

And here's something practical: learn to recognize surveillance. ICE vehicles often have specific license plate formats. Agents might wear identifiable clothing or equipment. Being aware of your surroundings helps you make informed decisions about when and where to exercise your rights.

Common Misconceptions About Facial Recognition

Let me clear up some misunderstandings I see repeatedly in discussions about this topic.

First, "I have nothing to hide, so I don't need to worry." This misunderstands the fundamental issue. It's not about hiding—it's about autonomy and consent. You should control who identifies you and when, especially when that identification can lead to serious consequences.

Second, "The technology isn't accurate enough to be concerning." That was true five years ago. In 2026, it's dangerously accurate, especially for frontal shots in good lighting. And even inaccurate systems can cause harm through false positives.

Third, "Only criminals need to worry." ICE's mandate includes civil violations, not just criminal ones. You could be completely law-abiding but still face immigration consequences based on scans.

Fourth, "I can just avoid protests and stay safe." Surveillance happens in everyday locations too—outside workplaces, near homes, in public transportation hubs. Avoiding certain activities doesn't guarantee protection.

The Future We're Heading Toward

Looking ahead, the trends are concerning. Facial recognition technology is becoming cheaper, more accurate, and more integrated into everyday law enforcement.

We're likely to see more agencies adopting similar mobile systems. Local police departments, transportation authorities, even private security firms could follow ICE's lead. The normalization of street-level biometric surveillance is already underway.

Integration with other technologies is another concern. Imagine facial recognition combined with automated license plate readers, social media monitoring, and predictive policing algorithms. You'd have a comprehensive surveillance system that tracks movements and associations and even predicts behavior.

International implications matter too. Other countries are watching how the U.S. handles this. Some authoritarian regimes would love to implement similar systems, and U.S. practices provide justification and technical models.

Taking Back Some Control

So where does this leave us? Overwhelmed? Maybe. But not powerless.

The most important thing you can do is stay informed. Follow developments in surveillance technology and privacy law. The landscape changes rapidly, and what works today might not work tomorrow.

Make conscious choices about your digital presence. Every facial image you put online potentially fuels these systems. Ask yourself if that social media post is worth the privacy trade-off.

Support businesses that respect privacy. Companies that don't use facial recognition, that encrypt data, that push back against government overreach—they need customers to survive and set examples.

And talk about this issue. Most people still don't understand how pervasive facial recognition has become. Share what you know. The Reddit discussion that inspired this article shows how powerful collective awareness can be.

Ultimately, we're facing a fundamental question about the kind of society we want to live in. Do we accept constant identification in public spaces as the price of security? Or do we demand boundaries between individuals and surveillance systems?

In 2026, that question is more urgent than ever. ICE's mobile facial recognition units aren't just a privacy issue—they're a test of our democratic values. And how we respond will shape surveillance for generations to come.